Listen to my interview with Rupa Chandra Gupta (transcript):


In schools, we use more tech tools every year. We also have very little time to vet them for quality. Do the math and you have a formula for some tech choices that may not be serving our students as well, or as equitably, as they should be.

It’s easy to dismiss this as no big deal. So what if we occasionally adopt something that isn’t the very best choice? The answer to that depends on a couple of factors: Are we spending a lot of money on the tool? Is it going to replace other learning experiences? Will it be time-consuming to adopt? Are we expecting it to close gaps and provide remediation? If the answer to any of these is yes, then it would definitely be a big deal if our chosen tool didn’t actually do what we thought it did. It would be an even bigger deal if that tool ended up widening the very gaps we were trying to close.

This is not to say that schools are just going about their tech decisions all willy-nilly. Surely everyone is acting in good faith. But when all the tools seem ideal, when they all promise to solve some of our most persistent problems, it’s pretty hard to figure out which one to pick. What we need is a framework for making these decisions, a set of practices that can help us determine which tool is really going to deliver on its promises.

Rupa Chandra Gupta, founder and CEO of Sown to Grow, is hoping to contribute something to that framework. As a former school administrator and the head of an ed tech company, Gupta has been both a consumer and a producer; this has raised her awareness of the interplay between equity and technology. Now she wants to hold herself and her peers to a higher standard when it comes to designing tools that meet the needs of more students.

Rupa Chandra Gupta, CEO at Sown to Grow

Although Gupta is a believer in technology’s potential to boost learning, she has learned that it can also accelerate our mistakes. “Technology amplifies whatever is happening,” she says. “If we’re widening a gap, it can be amplified by technology, and it happens faster, and it happens sometimes under the radar, because teachers and students might not be having every interaction in person anymore.”

When Tech Falls Short

The earliest seeds of this idea were planted when Gupta was working for a middle school that was undergoing a lot of significant change. As part of their transformation, the school adopted a comprehensive, personalized learning platform. “We invested a ton of time, weeks of professional development over the summers. We changed fundamentally the core of our instructional model—everybody rewrote their curriculum.”

At first, things seemed to be going fine, with students improving on benchmark assessments from fall to winter. “When we first pulled the numbers, if you looked at the average scores, we saw pretty significant growth of students overall. Great, right? Everyone’s excited.”

But a closer look at the numbers uncovered a different story. “I disaggregated the data,” Gupta explains, “and what we found was our students who were entering sixth grade on or above grade level were soaring. They were doing incredibly well in that self-directed learning environment. But our students who were coming in behind grade level were actually falling further behind. Not just moving forward at a slower pace or even staying flat; they were falling further behind.”

Despite their investment of time and money into the platform, Gupta and her colleagues decided to stop using it. “There might have been some room to tweak and kind of modify,” Gupta says, “but the disparity was so wide that it was clear that we had to just stop.”

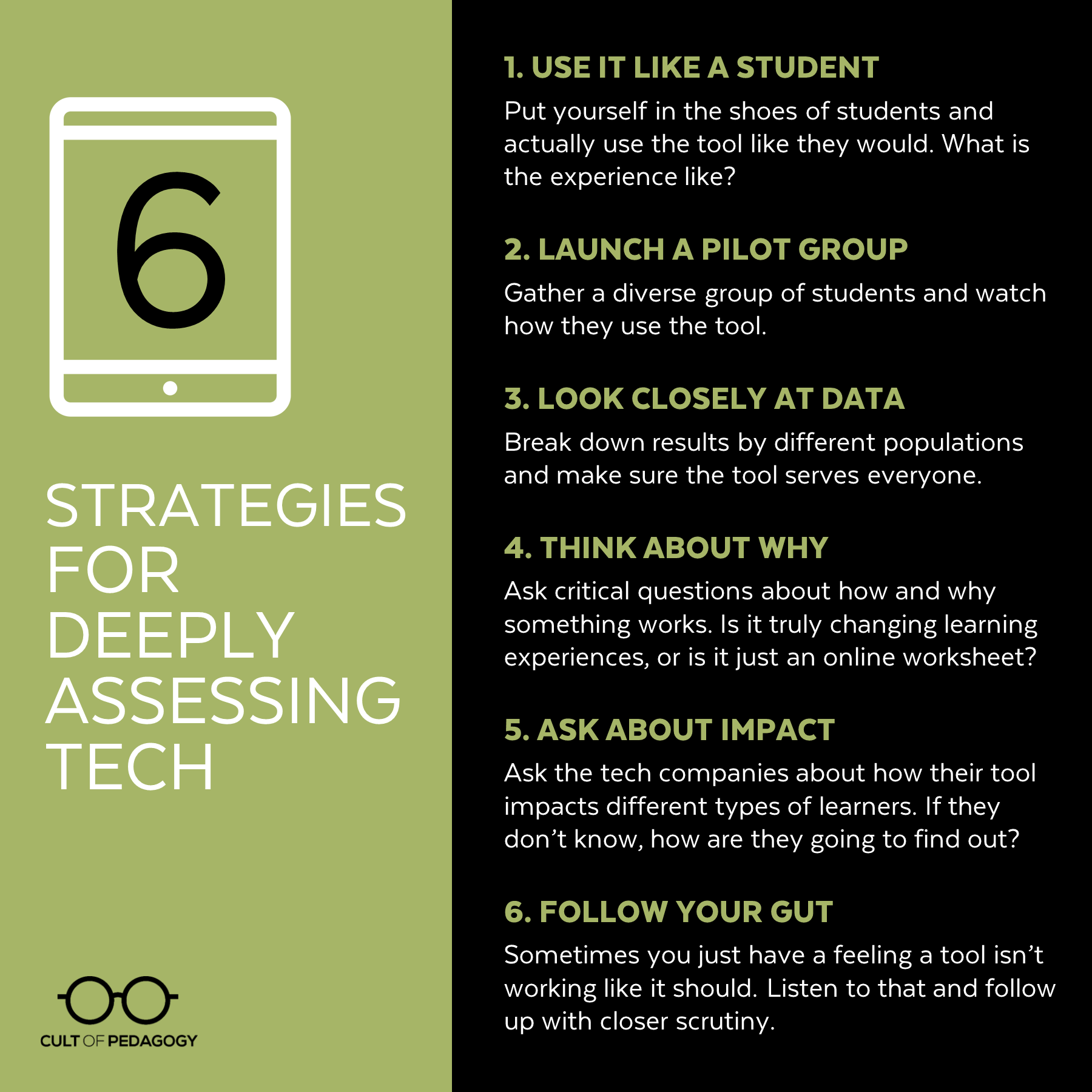

Obviously, this decision was inconvenient, and it left Gupta with the feeling that there had to be a better way, a more deliberate, systematic approach to evaluating tech before diving in. The following six strategies are what she suggests.

6 Strategies for Deeply Assessing Tech

Whether you’re considering a new tool or wondering whether one that’s currently in use is really effective, these six strategies can help you make more informed decisions.

1. Use It Like a Student

Sign in as a student and go through all the core elements of a tool. Put yourself in the shoes of one of your higher performing students and one of your lower performing students. How does the tool respond when students make mistakes? Where are the challenges? How can you solve them?

2. Launch a Pilot Group

Although using a tool “as” a student can uncover problems, nothing works better than putting it in the hands of real students. Instead of launching a platform school-wide, take the time to pilot it first with students. Gather a diverse group for this—both high achievers and students who are likely to struggle, native English speakers and English learners, and students who come from varied cultural and socioeconomic backgrounds—then pay attention to differences in how they are using, enjoying, and experiencing a product. Do they understand how to navigate inside the platform? Is the language used by the tool accessible to them? These kinds of questions should be considered before any kind of school-wide implementation.

3. Look Closely at Data

Although a tool might be giving you good results on the surface, your numbers could look different from another angle, so be sure to look closely. “If you do get data from any tools that you’re using yourself or from other benchmark assessments,” Gupta says, “break down the results by different student populations. Look for unintentional widening of equity gaps.”

This scrutiny should also be applied to tools that might not be purely academic, like apps meant to increase parent involvement. “I’ve seen digital portfolio apps that are beautiful and easy for kids to take pictures of their work and send home to parents and all of that,” Gupta says. “But I wonder: Is this a way for parents who are already engaged to get more engaged? Or is it really speaking to parents who we’ve been trying to bring into the fold? If parents don’t have smartphones and computers at home, can they access this stuff? If there is a subset of folks who aren’t able to engage or access, it’s probably folks who we want to make sure we’re not leaving behind, right?”
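For readers who want to try the kind of breakdown described in this strategy, here is a minimal sketch in Python. It compares an overall average with the same average computed per student group. Everything in it is an assumption made for illustration: the group labels, the scores, and the helper names `average_growth` and `growth_by_group` are hypothetical and do not come from Gupta's school or from any particular tool.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical benchmark records: (student group, fall score, winter score).
# The group labels and numbers are invented for illustration only.
records = [
    ("at_or_above_grade_level", 70, 85),
    ("at_or_above_grade_level", 75, 92),
    ("at_or_above_grade_level", 80, 94),
    ("below_grade_level", 50, 47),
    ("below_grade_level", 45, 41),
    ("below_grade_level", 55, 53),
]


def average_growth(rows):
    """Mean change from fall to winter across the given rows."""
    return mean(winter - fall for _, fall, winter in rows)


def growth_by_group(rows):
    """Average growth computed separately for each student group."""
    grouped = defaultdict(list)
    for row in rows:
        grouped[row[0]].append(row)
    return {group: average_growth(members) for group, members in grouped.items()}


overall = average_growth(records)
by_group = growth_by_group(records)

# The overall figure alone looks like healthy growth...
print(f"Overall average growth: {overall:+.1f}")
# ...while the per-group figures show whether any group is losing ground.
for group, growth in by_group.items():
    print(f"  {group}: {growth:+.1f}")
```

With this made-up data, the overall average is positive even though one group's average is negative, which is the pattern a single school-wide number can hide. The same grouping could be repeated for any population a school tracks, such as English learners.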

4. Think About Why

Ask yourself critical questions about how and why something works to improve student learning. “How is this tool fundamentally changing something about teaching and learning?” Gupta says. “What is it about this that’s innovative or different? I think when you ask yourself those questions, you can think about how that’ll play out for different groups of students. Is this tool truly changing learning experiences, or is it just a worksheet in an online format?”

5. Ask About Impact

If you spend a few minutes on an ed tech company’s website, you’re likely to find statistics about the tool’s effectiveness. Gupta has noticed that these numbers are rarely disaggregated by different levels of learners. “There’s not nearly enough transparent information about this,” she says. “So I would put the burden on people like me who are building tools. Ask them about evidence of impact in working with different types of learners. Like, ‘tell me what the difference is between these different types of students I serve.’ And if you don’t know, how are you going to find out?”

6. Follow Your Gut

“Experienced educators have such an amazing sense for what’s going to work well for their students in their context,” Gupta says. “So trust your gut.” Listening to your gut can prompt you to take a closer look and follow through with the other steps listed above.

Does this mean you have to stop using a favorite tool? Not necessarily. “None of this is intended to suggest that teachers stop using things they like,” Gupta says. “It’s more like OK, this is making me nervous about X, Y, and Z. What scaffolds am I going to put into place? It’s meant to make sure that this thinking is a part of the protocol when you are testing new tools and ideas. Because if we can elevate it in the conversation, then I think it’s more likely that the whole system will adjust to make sure it’s elevated in importance, right?” ♦

You can find Rupa Chandra Gupta on Twitter at @rupa_c_g. Learn more about how Sown to Grow measures its own impact at sowntogrow.com/impact.


The big problem, as I see it, is expecting a tool to move the needle. It is never the tool but the application that makes the difference. So many one-to-one computer and tablet programs have shown this. It’s not enough to simply give the students the tools. The tools have to be put into the service of learning. They have to increase comprehension, retention, communication or collaboration. Then the teacher has to synthesize the information and interaction with the tool for it to improve student outcomes. Simply providing the technology is not enough; we still have to provide the instruction.

Hi Herb,

I couldn’t agree more! I strongly believe that technology, when implemented well, should amplify strong instructional practice and deepen relationships – not the opposite. Appreciate the thoughts!

I found #2 to be especially helpful in practice. I hadn’t seen any other teachers do this kind of piloting with their students, but as a new-ish teacher, I found it valuable! We teachers try new things basically as trial-and-error on a daily basis, so I figured a pilot program could really save me instructional time in the future.

This past year during our weekly RTI time, I put together a wide assortment of students (from beginning ELLs to gifted students, behavior both good and bad) to be my “guinea pigs.” I gave each of them a different ed tech program to test by way of a mock “assignment.” I provided support to get them started, and I visited with every student during the process to get their feedback. The students were thrilled with their chance to be an ever-so-harsh “critic” of a school program! They were *more than honest* about which aspects were too difficult, what their program’s drawbacks were, and what technical and logistical difficulties they had come across. I was also able to see which programs were the most engaging by observing and listening:

“Ooh cool!” …. “I wish I was doing that program!” …. “Let me try!”… seeing others huddled around a group’s computers with jaws agape, etc.

Not only did the students feel like they were heard, but they scored a huge advantage and got to “show off” when I chose to incorporate a couple of the programs in regular class. Because of their prior experience, even my ELLs were confident enough to be my “tech supports,” where they answered questions and helped classmates get the hang of the new program.

#2 — I WILL be doing again!

Just listened to this really interesting episode and you were looking for a place that rates educational tools. Try the What Works Clearinghouse. They require studies to back up claims and there are strict guidelines to get the study results onto their site. https://ies.ed.gov/ncee/wwc/

This is a great post. I teach at a middle school where we have 5-year-old Chromebooks in our classrooms. This is not usually a problem, but when I find something new, I try it out as a student, including the login process and the basic functions. You would be surprised how often cool-looking things turn out to be not very user-friendly and not work very well with the Chromebooks. When something does work, I make a protocol sheet for the students covering how to log in and how the basic functions work, then model all this through the overhead projector onto the screen. Yes, this takes class time, but if the tool REALLY adds value to class, it’s worth the time and effort. Also, you can break down tools with the SAMR model: What are you using this for? What is the goal when students use it? Too many times the district comes through with some eye-candy tech piece that looks great but adds no value to the classroom. Anybody remember Achieve 3000 reading software?

Thanks for sharing, Budd! As an alternative to using a protocol sheet, you might want to consider looking into doing some screencasting with Screencastify or Screencast-O-Matic, both great tools for making video tutorials.

First of all, thank you, Ms. Gupta, for having the courage of humility to tell us the story of what you learned by your mistakes. That is a rare quality. Second, thanks to both of you for giving the ed tech industry a critical look, and I am hopeful that one day there will be a Snopes-like site that examines ed tech products’ research claims.

There is an elephant in the room, however, which didn’t get addressed. It is the very nature of the businesses that seek to partner with schools. Businesses are businesses in order to make money. I feel quite certain that Ms. Gupta’s business keeps in mind the needs of students and teachers and seeks to be transparent in its research and that she is a trustworthy advocate for children and teachers. However, I am generally suspicious of businesses that see education as their marketplace, and the critiques of the math program that didn’t work in Ms. Gupta’s former school encouraged my suspicions. For instance, doing good (disaggregated) research is expensive and time-consuming, and that reduces profits. It’s also risky: what if the research shows that the product serves student group A very well but harms student group B? What business will put that on its marketing materials? If a business wants to make a profit, it needs to get income (sell the product to adults) and keep its expenses down, and that could be really different from doing good work to benefit all children.

I don’t really have a solution for this tension. Schools need products, and schools have always purchased products. It just seems that there are SO MANY ed tech products now, they’re claiming to be the very thing that will solve educational problems, and they can get pretty expensive. In its more extreme versions, the ed tech industry is getting really alarming. (Have you read Wrench in the Gears?) And who has time to curate all these choices?

I am also grateful to learn about this math product that didn’t work. I know programs like this exist, but I’ve never had a chance to talk with someone who has had firsthand deep experience with one. It sounds like this: Students work independently on a computer for a block of time every day in class. The program teaches them the math concepts and procedures and tests them on it. Students work through the program at their own pace, so students in one class can be at any level in the program. Is this correct? If so, what does the teacher do?

I am a teacher educator in a university. In late August, I’ll be working with a new bunch of teacher candidates, and I don’t know what to tell them. What could their professional lives look like if their schools rely on ed tech products to do the teaching? Do you think schools will be bringing in more of this kind of tech in the future? Thank you again for the podcast and your work!

Hi Carrie,

First, thank you for your thoughtful response! I totally hear your worries about the tensions of profit vs impact. As an impact-driven social entrepreneur, that’s something that’s on my radar every day. The one upside of building impact-oriented businesses is that they can hopefully be sustainable in the long run (vs programs that come and go based on grant funding).

I also believe the voices of educators are hugely powerful in demanding that companies like mine deliver on efficacy. If teachers and schools choose not to buy products unless the companies behind them are collecting and publishing data as they go, we’ll be moving the needle in the right direction.

On your last question, it’s hard for me to see a quality learning environment that’s solely tech. I strongly believe that the most effective use of technology in classrooms amplifies the role of the teacher and deepens relationships (vs replacing them!). It often allows teachers to meet with students in smaller groups, give feedback more quickly or gather and use data efficiently – all to inform solid instructional practice. I’d love to engage further if you’re interested – just reach out at my contact info listed above 🙂

Thank you for training new teachers for one of the most important roles in our society!

Best,

Rupa